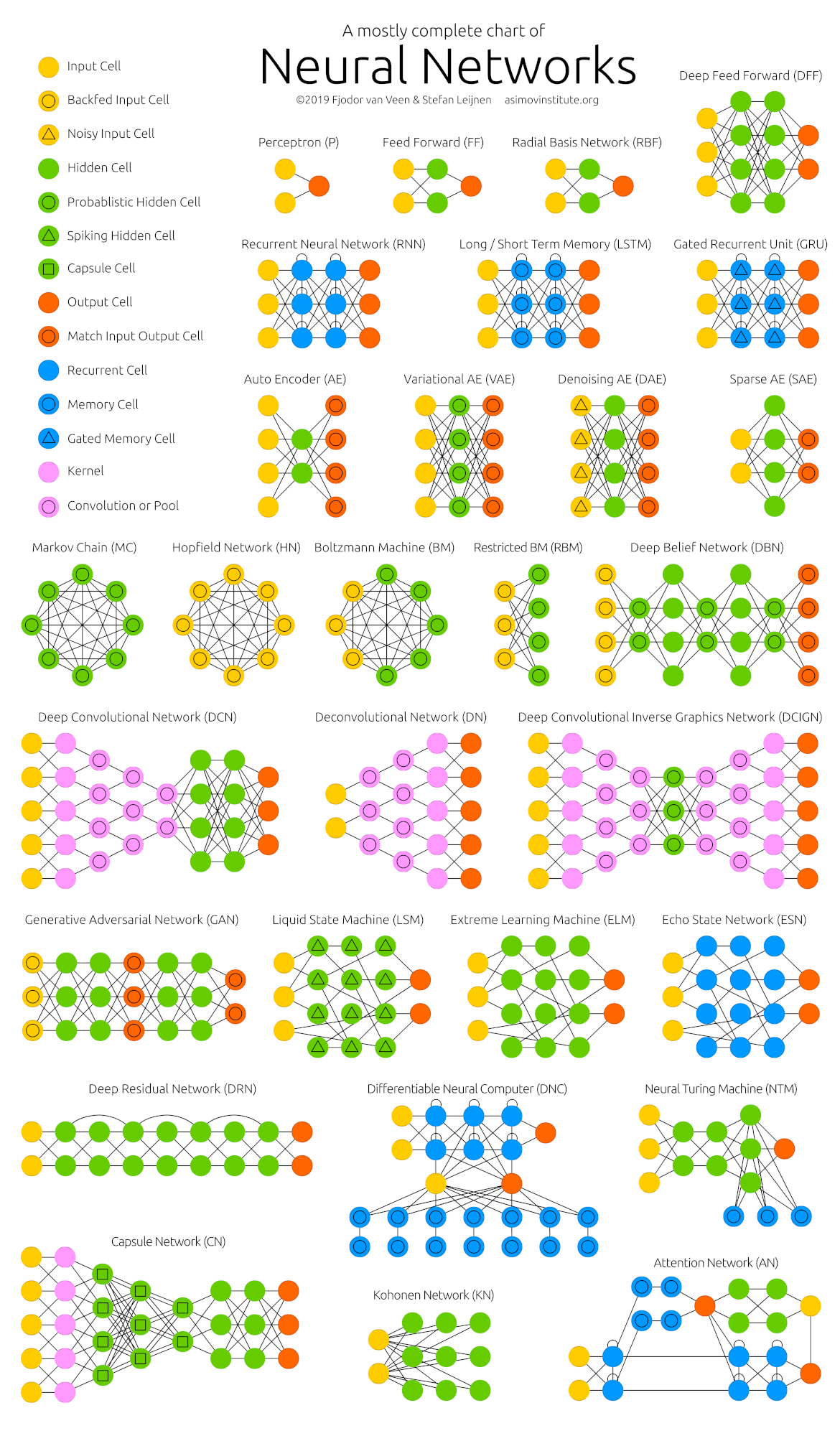

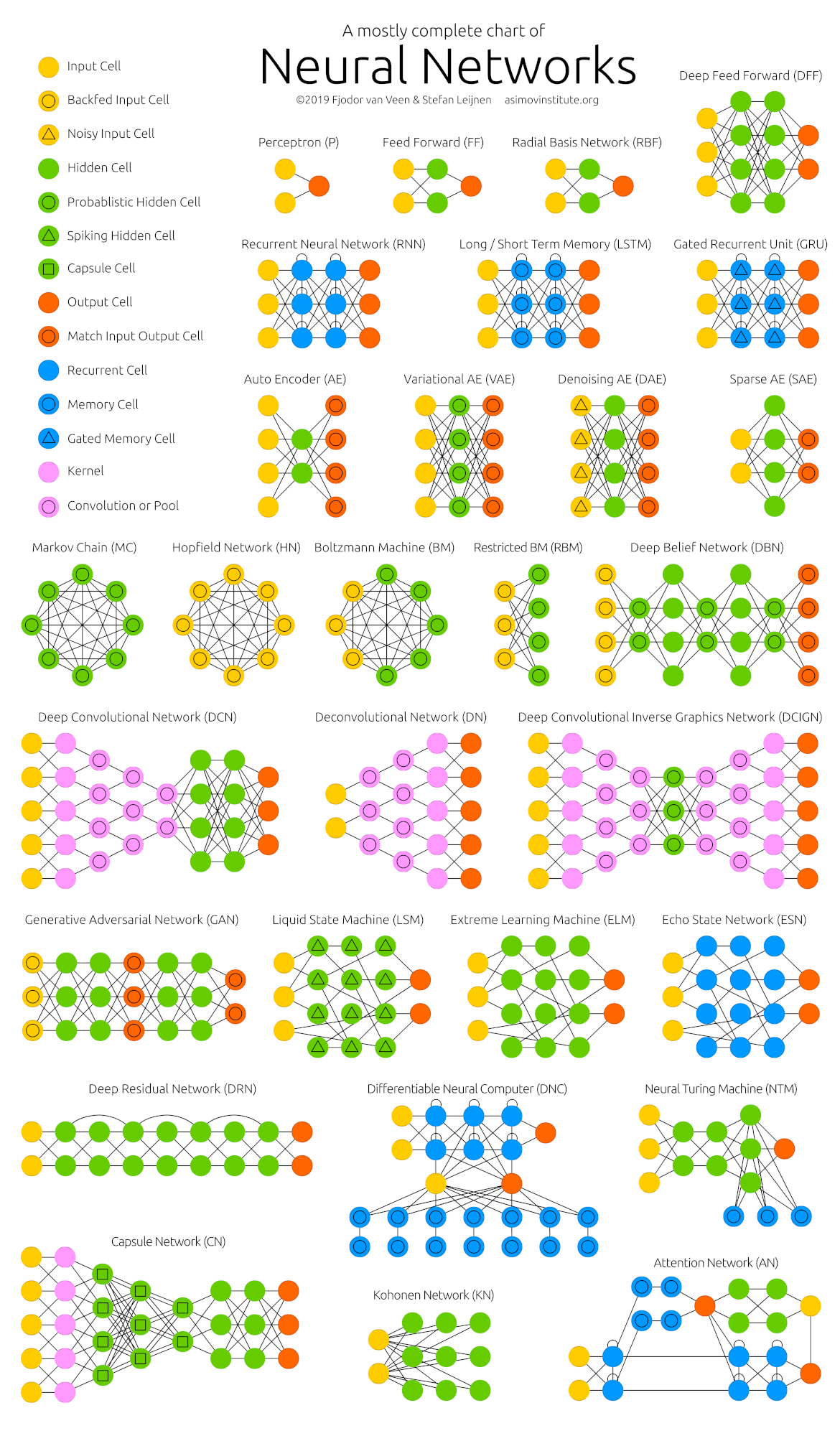

<think>

First I need to say that neural networks have existed longer than you might think. They're also used for OCR and more. LLMs are just one result of 'em.

I also still remember ELIZA.

See also the Documents and the Downloads.

And here are two other links, if you're interested:

Eliza Bot

ELIZA Archaeology Project

BUT don't expect too much!

These are only my own favorites. There are others, too, but I don't really like using public versions, only sometimes.

Gemini (Google), ChatGPT (OpenAI)

Here's another tip: NVIDIA offers models/API endpoints/.., also for free! Just enable Free Endpoint on this website: ...

These three are the apps I've already tested.

LM Studio, llama.cpp, ollama

I haven't tested these myself; I've only heard about them. Maybe good?

Jan, vllm, msty, mlx-lm

And feel free to take a look at my Downloads ... w/ and .

I really like running LLMs on my local machine, or on smth. not accessible by others. Even if I'm pretty sure, E.T./A.I. phone home... xD~
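For the local route, here is a minimal sketch of what talking to a locally running model can look like. It assumes ollama is running on its default address (`http://localhost:11434`) and uses its `/api/generate` endpoint. The model name `llama3.2` is only a placeholder for whatever model you have pulled, and the helper names `build_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Assumption: ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3.2"):
    """Build a non-streaming generate request (the model name is a placeholder)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3.2", timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the answer arrives as one JSON object
    # whose "response" field holds the generated text.
    return body["response"]
```

With the server running and a model pulled (e.g. `ollama pull llama3.2`), `print(ask("Why is the sky blue?"))` should print the answer. The request goes to `localhost` only.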

Open LLM Leaderboard

Here are my favorite models (currently)

...

sorted by date/...randomly!

Here are some abliterated models @ Hugging Face (please explicitly search for 'em there).

It's about uncensoring LLMs ... the "easy" way (in a "global" form, not as usual by changing the prompt(s)). Find out more here:
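To make the "global" idea a bit more concrete, here is a toy sketch of the core step as it is commonly described for abliteration: estimate a "refusal direction" as the difference of mean activations between two prompt sets, then project that direction out of a weight matrix so the layer can no longer write along it. Everything here is an assumption for illustration: the tiny made-up activation vectors, the 3x3 weight matrix and the function names are hypothetical, and this is not how any particular model on Hugging Face was actually produced.

```python
import math


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def refusal_direction(harmful_acts, harmless_acts):
    """Unit vector pointing from the harmless mean to the harmful mean."""
    diff = [a - b for a, b in zip(mean_vector(harmful_acts), mean_vector(harmless_acts))]
    norm = math.sqrt(sum(x * x for x in diff))
    if norm == 0.0:
        raise ValueError("the two activation sets have the same mean")
    return [x / norm for x in diff]


def ablate_direction(weight, direction):
    """Return W' = W - r (r^T W), so that W' x has no component along r.

    weight is a d_out x d_in matrix (list of rows), direction has length d_out.
    """
    rows, cols = len(weight), len(weight[0])
    # r^T W: how strongly each input column writes along the direction.
    proj = [sum(direction[i] * weight[i][j] for i in range(rows)) for j in range(cols)]
    return [[weight[i][j] - direction[i] * proj[j] for j in range(cols)] for i in range(rows)]


def matvec(weight, x):
    """Matrix-vector product W x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def dot(a, b):
    """Dot product of two vectors."""
    return sum(x * y for x, y in zip(a, b))


# Made-up "activations" for two prompt sets (toy numbers only).
harmful = [[1.0, 2.0, 0.0], [1.0, 4.0, 0.0]]
harmless = [[1.0, 0.0, 0.0], [1.0, 0.0, 0.0]]

r = refusal_direction(harmful, harmless)
w = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
w_ablated = ablate_direction(w, r)

# The ablated layer's output should have (numerically) no component along r.
print(dot(matvec(w_ablated, [1.0, 1.0, 1.0]), r))
```

Because the change is baked into the weights, it applies to every prompt, which is what makes it "global" compared to prompt tricks.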

I collected these interesting links (for myself) while surfing the web.

They aren't sorted by priority or smth. like that ... they're just sorted by date!

robots.txt)

- Unsloth Docs
- How Transformers Think: The Information Flow That Makes Language Models Work
- How LLMs Choose Their Words: A Practical Walk-Through of Logits, Softmax and Sampling
- A visual introduction to machine learning
- Model Tuning and the Bias-Variance Tradeoff
- Language models can explain neurons in language models
- Transformer Explainer
- Prompt caching: 10x cheaper LLM tokens, but how?
- [YouTube] Andrej Karpathy
- DreamGen
- What Is ChatGPT Doing … and Why Does It Work?
- Energy-based Hallucination detection
- How LLMs Work, Explained Without Math
- Visualizing machine learning one concept at a time.
- OWASP GenAI Security Project
- World Models
- Transformer Circuits Thread
- Let’s Build the GPT Tokenizer: A Complete Guide to Tokenization in LLMs
- Sebastian Raschka (not all articles for free!!)
- NVIDIA Models/API (Tip: enable Free Endpoint)
- Giles' blog

Here are interesting GitHub repositories that I forked. Only those which are related to artificial intelligence.

- artificial-life
- autoresearch
- awesome-opensource-ai
- CL4R1T4S
- craftgpt
- guppylm
- llama2.c
- llama3pure
- llm-course
- llm-from-scratch
- llm.c
- mempalace
- nanochat
- remove-refusals-with-transformers
- system_prompts_leaks
- thunderbolt

I don't know how this scales in practice, but the idea itself seems interesting!

See and its GitHub repo.
